I’ve just released my Learn to read Korean app for iPhone. It uses a number of child View Controllers on the home screen. While child View Controllers are not a new thing, they were a new experience for me, and I highly recommend them for reducing the clutter in your View Controllers.

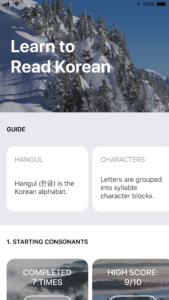

Here’s a photo of my home screen:

The main view controller consists of a vertical UIScrollView and multiple horizontally scrolling UICollectionViews below. While it’s possible to do it all in one massive View Controller, it’s much better to delegate each UICollectionView’s events to its own child View Controller.

The good news is that using child UIViewControllers is super easy. You can use your Storyboard or do it programmatically in your UIViewController files. I opted for the latter as I find it easier to reproduce across Xcode projects.

The code below is all you need to add a child View Controller. The constraints section at the end is optional; `heroView` and `height` are placeholders for a view and a height from your own layout.

// Create child VC

let childVC = UIViewController()

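// Add child VC to the parent (addChildViewController(_:) before Swift 4.2)

self.addChild(childVC)

// Add child VC's view to parent

self.view.addSubview(childVC.view)

// Tell the child VC that the move has finished (didMove(toParentViewController:) before Swift 4.2)

childVC.didMove(toParent: self)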

// Setup constraints for layout

childVC.view.translatesAutoresizingMaskIntoConstraints = false

childVC.view.topAnchor.constraint(equalTo: heroView.bottomAnchor).isActive = true

childVC.view.leftAnchor.constraint(equalTo: self.view.leftAnchor).isActive = true

childVC.view.widthAnchor.constraint(equalTo: self.view.widthAnchor).isActive = true

childVC.view.heightAnchor.constraint(equalToConstant: height).isActive = true
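If you add several children, the containment steps above can be wrapped in a small helper. The following is a minimal sketch, assuming Swift 4.2 or later. The method names `embed(_:)` and `unembed()` are hypothetical and not part of UIKit. It also shows the reverse sequence for removing a child, which the steps above do not cover.

```swift
import UIKit

extension UIViewController {
    /// Hypothetical helper: adds `child` as a child view controller and
    /// attaches its view to this view controller's view.
    /// Layout (frame or constraints) is left to the caller.
    func embed(_ child: UIViewController) {
        addChild(child)               // UIKit calls willMove(toParent:) for you here
        view.addSubview(child.view)
        child.didMove(toParent: self) // must be called manually after adding
    }

    /// Hypothetical helper: detaches this view controller from its parent.
    func unembed() {
        guard parent != nil else { return }
        willMove(toParent: nil)       // must be called manually before removing
        view.removeFromSuperview()
        removeFromParent()            // UIKit calls didMove(toParent: nil) for you here
    }
}
```

With this in place, the parent only needs `embed(childVC)` followed by whatever constraints suit its layout.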

With multiple child VCs (each handling its own UICollectionView events), the code base becomes much more manageable. In each child View Controller, you can handle customization, such as background color, UILabels, UIButtons, etc.
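As a rough illustration of what one such child might look like, here is a sketch of a view controller that owns a single horizontally scrolling UICollectionView and handles its events itself. The class name `RowViewController`, the `items` array, the cell size, and the colors are all assumptions made up for this example, not code from the app.

```swift
import UIKit

/// Hypothetical child view controller that owns one horizontal collection view.
final class RowViewController: UIViewController, UICollectionViewDataSource, UICollectionViewDelegate {
    private let items: [String]   // placeholder data for illustration
    private var collectionView: UICollectionView!

    init(items: [String]) {
        self.items = items
        super.init(nibName: nil, bundle: nil)
    }

    required init?(coder: NSCoder) {
        fatalError("init(coder:) is not supported in this sketch")
    }

    override func viewDidLoad() {
        super.viewDidLoad()

        // A flow layout that scrolls sideways instead of vertically
        let layout = UICollectionViewFlowLayout()
        layout.scrollDirection = .horizontal
        layout.itemSize = CGSize(width: 120, height: 120)

        collectionView = UICollectionView(frame: view.bounds, collectionViewLayout: layout)
        collectionView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        collectionView.backgroundColor = .white
        collectionView.register(UICollectionViewCell.self, forCellWithReuseIdentifier: "Cell")
        collectionView.dataSource = self
        collectionView.delegate = self
        view.addSubview(collectionView)
    }

    // MARK: UICollectionViewDataSource

    func collectionView(_ collectionView: UICollectionView, numberOfItemsInSection section: Int) -> Int {
        return items.count
    }

    func collectionView(_ collectionView: UICollectionView, cellForItemAt indexPath: IndexPath) -> UICollectionViewCell {
        let cell = collectionView.dequeueReusableCell(withReuseIdentifier: "Cell", for: indexPath)
        cell.contentView.backgroundColor = .lightGray
        return cell
    }

    // MARK: UICollectionViewDelegate

    func collectionView(_ collectionView: UICollectionView, didSelectItemAt indexPath: IndexPath) {
        // Selection is handled here, so the parent never sees this event
        print("Selected \(items[indexPath.item])")
    }
}
```

The parent can create one instance per row and add each one as a child, so none of the data source or delegate methods end up in the parent.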

Another tip I have is to use UIView’s convert(_:to:) method as necessary. You may need to get a child subview’s position relative to your parent View Controller’s view (such as for a UIViewControllerTransitioningDelegate). The code for that is simple too:

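// Contrived example: a label in the child VC, converted to the parent's coordinates

let label = UILabel()

// Use bounds (the label's own coordinate space), not frame (the superview's space)

let labelBounds = label.bounds

let frameInParent = label.convert(labelBounds, to: parentVC.view)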
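The same conversion can be packaged as a small helper on the child. This is only a sketch: `frameInParentView(of:)` is a hypothetical name, and it assumes the child has already been added to a parent. Note that convert(_:to:) interprets the rectangle in the receiver's own coordinate system, so the helper passes the subview's `bounds`.

```swift
import UIKit

extension UIViewController {
    /// Hypothetical helper: returns the rectangle occupied by `subview`,
    /// expressed in the parent view controller's view coordinates.
    /// Returns nil if this view controller has no parent.
    func frameInParentView(of subview: UIView) -> CGRect? {
        guard let parentView = parent?.view else { return nil }
        // `bounds` is in the subview's own coordinate system, which is what
        // convert(_:to:) expects when it is called on the subview itself.
        return subview.convert(subview.bounds, to: parentView)
    }
}
```

Inside a child VC this could be called as `frameInParentView(of: label)`, for example to find the starting frame for a custom transition.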

That’s all I wanted to share for today. Don’t be afraid of using child View Controllers to break up your massive View Controllers!